When starting – or advancing – on a DevOps journey, one of the first things we want to ask ourselves is: How will we know if we are making any progress?

Well, the answer should be rather easy: We measure it. As the famous Austrian-American economist and management scholar Peter Drucker said, “You can’t improve what you don’t measure.” But there is also an accompanying quote, which says: “When measurements become an end in and of themselves, they consign themselves to irrelevance.” In other words: Don’t measure just for the sake of it. There needs to be a purpose behind the KPIs we are measuring.

Therefore, the real question we need to ask ourselves should always be, “What should we measure?” This is where things can get a bit complicated. Or at least, this is where they used to get complicated, before the gold standard of measuring DevOps was established.

What is the industry standard (and why) for measuring DevOps performance?

When talking about measuring DevOps, there is no way around talking about the 4 DORA key metrics first. When introducing these measurements to our projects at Nagarro, we have found the 4 DORA metrics to be great starting points for any project we are supporting. Irrespective of the context, these metrics have consistently provided valuable insights into where the biggest gaps and areas for improvement in the Software Development Life Cycle (SDLC) are.

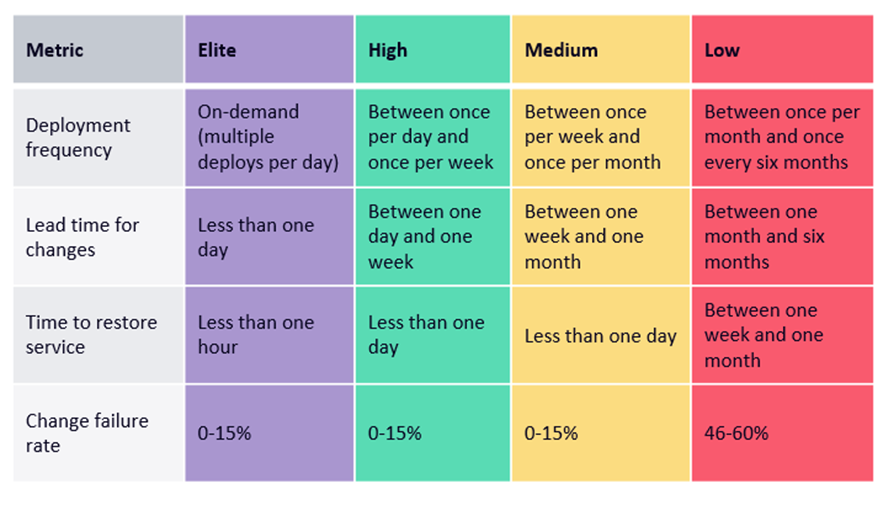

So what are these 4 DORA metrics? Let’s understand each of them:

- Deployment Frequency (DF) – This means how often code changes are being deployed to production. This number can range from multiple times per day to only a few times per year. Sometimes, this depends heavily on external factors like legal restrictions or how often updates are actually needed. But, in general terms, we can say: The more frequent the better.

- Lead Time for Change (LTfC) – This is the average time it takes for a change to reach production after its code has been committed. Again, this is typically a “the faster the better” situation, where top performers lie in the less-than-a-day range.

- Mean Time to Recovery (MTtR) – This relates to the average time required to fix major issues in production. It has (almost) become standard in any project to be able to react to and recover from major errors within one day. This is simply because in today’s fast-paced world, most companies cannot afford grave errors in production over a longer period.

- Change Failure Rate (CFR) – This is the percentage of deployments to production that result in severe errors, which require immediate attention. Usually, this is the fraction of production deployments that result in either a hotfix or a rollback.
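To make the four definitions above concrete, here is a minimal sketch of how they could be computed from a list of deployment records. The `Deployment` record, its field names, and the `dora_metrics` function are hypothetical and not part of any DORA tooling. The sketch also assumes that recovery time is measured from the failed deployment until service is restored; your own definition of when an incident starts may differ.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    committed_at: datetime                   # when the change was committed
    deployed_at: datetime                    # when it reached production
    failed: bool = False                     # True if it needed a hotfix or rollback
    restored_at: Optional[datetime] = None   # when service was restored after a failure


def dora_metrics(deployments: list[Deployment], period_days: int) -> dict:
    """Compute the four key metrics over an observation period of `period_days`."""
    if not deployments:
        raise ValueError("need at least one deployment")
    n = len(deployments)

    # Throughput: how often we deploy and how long a commit takes to reach production.
    lead_times = [d.deployed_at - d.committed_at for d in deployments]

    # Stability: how often a deployment fails and how long recovery takes.
    failures = [d for d in deployments if d.failed]
    # Assumption: recovery time runs from the failed deployment to restoration.
    recoveries = [
        d.restored_at - d.deployed_at for d in failures if d.restored_at is not None
    ]

    return {
        "deployment_frequency_per_day": n / period_days,
        "lead_time_for_change": sum(lead_times, timedelta()) / n,
        "mean_time_to_recovery": (
            sum(recoveries, timedelta()) / len(recoveries) if recoveries else None
        ),
        "change_failure_rate": len(failures) / n,
    }
```

In practice, the records would come from your CI/CD system and incident tracker. The point of the sketch is only that all four numbers fall out of the same small set of timestamps.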

A tabular representation of the DORA metrics to measure DevOps performance


On taking a closer look, we can divide these metrics into two groups: Metrics that measure throughput and those that measure stability.

DF and LTfC measure throughput whereas MTtR and CFR measure stability factors. Throughput metrics represent the health of your pipeline and possible issues in the release flow. On the other hand, stability metrics can reveal any issues in the quality and testing aspects of the software.

These two groups pull against each other, and the trade-off can be costly. Even if you excel in two, or maybe even three, of those metrics, you could still lack the flexibility that very mature DevOps projects promise:

- Excelling in throughput but lacking the necessary stability: It is easy to set up a project in a way that code changes are immediately deployed to production. While this automatically leads to great numbers for DF and LTfC, code quality will most likely suffer, and MTtR and CFR will rank only among Low performers (or possibly even below that).

- Excelling in stability but lacking throughput: You can put high effort (and cost) into quality assurance, which will stabilize the builds and will eventually result in a very low CFR and a fast MTtR, but it will come at the cost of a lower DF and a longer LTfC.

In order to reach Elite performer status, it is imperative to improve on all the four key DORA metrics since they aim to cover almost every part of the SDLC in some way. They provide a solid foundation to identify any waste in your processes and improve the entire value stream.

This is where some of the base principles for improving the DevOps value stream come into play: CI/CD, continuous improvement, a focus on quality assurance and test automation, automated quality gates, monitoring and, at the end of the rainbow, possibly continuous deployment will help you shine!

At Nagarro, we have seen how more and more of our clients, projects and teams have advanced their DevOps performance over the years, across all industries. This is reflected by numbers that display the changes in the DORA DevOps survey from 2018 to 2021. According to the survey results, Elite performers rose from 7% to 26%. On the other hand, during the same period, low performers declined from 15% to 7%. DevOps has matured and arrived in almost all organizations and continues to break down silos between development, operations, and other stakeholders.

DORA has established the 4 key metrics and at Nagarro, we see them as a stable indicator of high performance and a great way to find room for improvement quickly. Often, the very act of setting up the measurement of those KPIs surfaces issues that would otherwise have remained hidden. As a result, those metrics should be included in any measurement strategy that involves advancing the SDLC. However, they are not the only metrics one could and should measure.

Blog author Alexander Birsak in our "Let's talk DevOps" - video talk show on the topic of DevOps measurement

What else can you measure?

There is a short answer to this question: Everything.

Depending on your own specific project context and improvement areas, you can define any metric around the DevOps value stream.

- Are you unhappy with the time it takes from when you first hear about a feature to when you start developing it? Measure it!

- Does employee fluctuation seem too high? Measure it!

- Are the tests flaky? Measure it! (The flakiness)

- Too much production downtime? Measure it!
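As an illustration of how quickly such a custom metric can be set up, here is a minimal sketch for the flaky-test case. It assumes one possible definition of flakiness: a test is flaky if it both passed and failed on the same commit. The tuple layout of the input and the function names are hypothetical and only serve the example.

```python
from collections import defaultdict


def flaky_tests(runs):
    """Return the names of tests that both passed and failed on the same commit.

    `runs` is an iterable of (test_name, commit_sha, passed) tuples.
    """
    outcomes = defaultdict(set)
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(passed)
    # Two distinct outcomes for the same test on the same code means flaky.
    return {test for (test, _), seen in outcomes.items() if len(seen) == 2}


def flakiness_rate(runs):
    """Fraction of all observed tests that are flaky by the definition above."""
    runs = list(runs)
    all_tests = {test for test, _, _ in runs}
    if not all_tests:
        return 0.0
    return len(flaky_tests(runs)) / len(all_tests)
```

Tracking this rate over time shows whether the test suite is becoming more or less trustworthy, which is the kind of progress a metric like this is meant to capture.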

It is worth mentioning that there are lots of well-explored metrics out there that may or may not suit your needs. It is important to remember that what goes for the four key metrics goes for every other KPI as well: Don’t lose sight of what you are measuring and why, and keep the focus on measuring progress instead of measuring people.

Why measure DevOps performance at all?

KPIs, their monitoring, and their interpretation are enablers and drivers for change. As already explained in the introduction of this blog post, the only way to know if we have succeeded in our endeavours of implementing or advancing the pipeline is to have clear, specific goals to aim for. These goals can be as broad as those defined by the 4 key metrics or as specific as employee satisfaction or employee fluctuation.

At Nagarro, we often see within our consulting projects that metrics are introduced with the right goal in mind and the right intent at heart. However, in the long term, the focus tends to shift from measuring progress to measuring the performance of individuals or teams. Therefore, it is vital to keep some of the big questions in mind while measuring and to review the focus on the target itself regularly. Some of these big questions are: What are we measuring? To what purpose? How? Who keeps track of it? Who interprets the numbers, and who challenges them?

Conclusion

The four key metrics are the most important metrics that you need to know about, as they aim to cover almost every part of the SDLC in some way. They further provide a solid foundation to identify waste in your processes and will, if used correctly over time, improve your whole DevOps value stream.

Some of the most basic DevOps principles like continuous flow, test automation or CI/CD are among the best practices to improve these key DevOps metrics.

For everything else, context is more relevant than having generalized standards in place. There is no standardized, quick way of advancing from being a High performer to an Elite performer. This is where expertise, KPIs, and a strong focus on continuous improvement do the heavy lifting.

I hope these insights will help you get started (or advance further) in your efforts to measure your DevOps ways of working. I'd love to hear about your experiences and your feedback or comments on this blog post. Please feel free to email me at alexander.birsak@nagarro.com