What if I told you that you’ve always had a raw, uncut diamond that only needed some polishing to shine even more brightly? And guess what: this is no fictional hypothesis but a true story.

Having shifted gears from the workforce to machines and now technology, organizations have realized the importance of that secret gem: Data. It's been there all along, but now it's time to unleash its polished potential. Get ready to dive into this enlightening blog, where we'll explore the art of leveraging high-quality data to be a catalyst for boosting organizational growth.

Welcome to the realm of the modern world, where true worth lies not in the sheer quantity, but in the impeccable quality of data. As the British mathematician Clive Humby famously said, "Data is the new oil." However, the potential of both data and oil remains untapped until they are refined. Amidst the race to become data-driven, organizations often overlook the paramount importance of data quality. Let's try to understand the indispensable role of data quality while exploring the true power of being a data-driven business.

Unveiling the significance of data quality in business success

A Gartner report from July 2021 revealed that organizations face an alarming average loss of around $13 million annually, which can be attributed directly to data quality issues. This immediate impact is undoubtedly striking. However, the repercussions of such issues, including complex data ecosystems and compromised decision-making, become evident only in the long term.

Findings from a survey conducted by Sage Growth Partners and InterSystems also point to a staggering truth: when it comes to making critical data-driven decisions, a mere 20% of organizations have confidence in their data.

Cracking the code: The 8Ws we need to know before building a winning test strategy

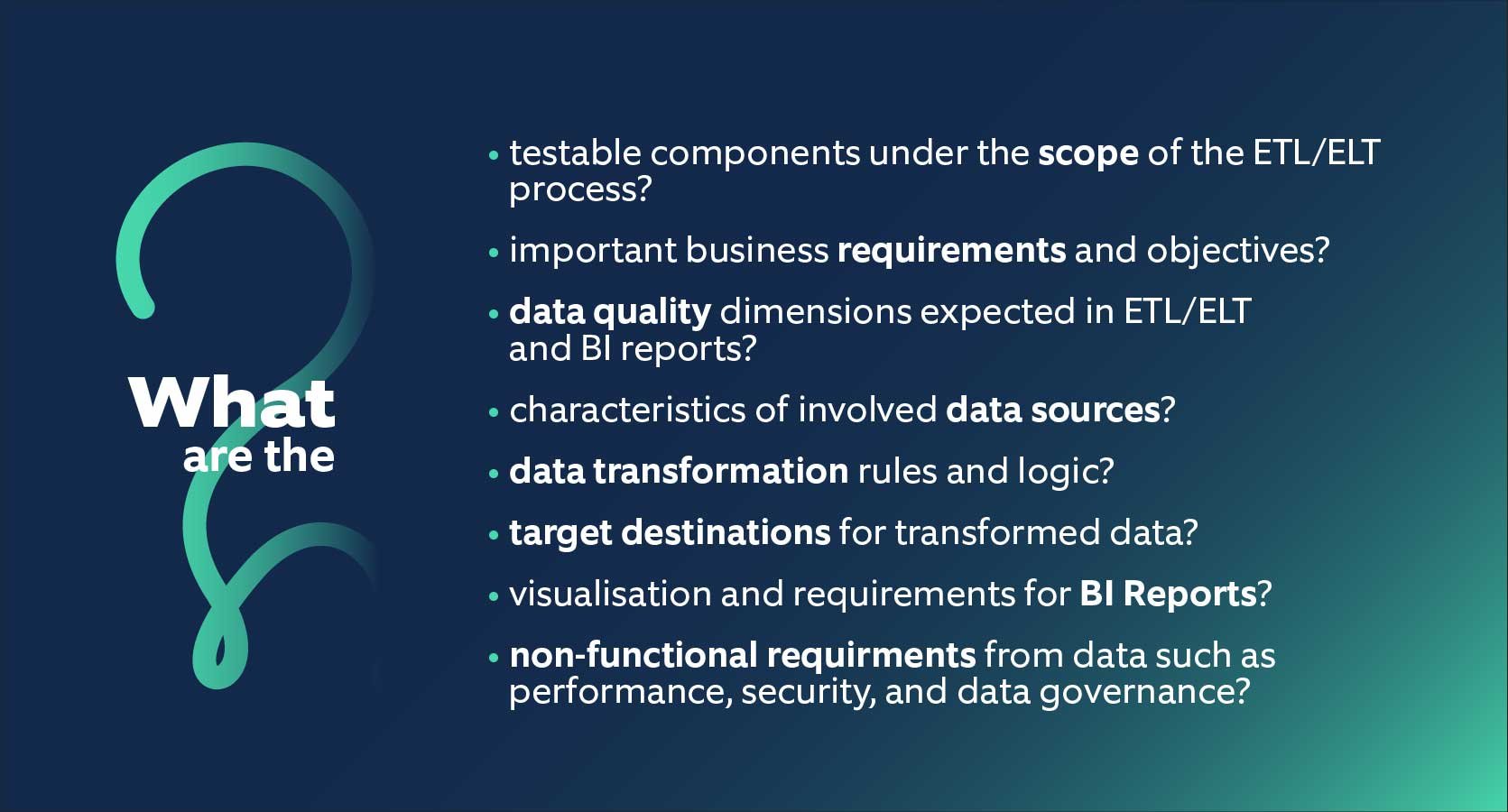

To address data quality concerns and establish trust in data insights, it is crucial to develop an effective test strategy that covers business goals and key factors. Diving into the 8Ws is critical in gaining in-depth knowledge and in building a comprehensive and successful test strategy for both the ETL/ELT process and BI reports. By thoroughly exploring these factors, organizations can ensure data reliability, make informed decisions, and unlock the full potential of their data-driven initiatives.

Figure 1: The 8Ws to know before building a winning test strategy

The resurgent PROVEN™ test strategy for unparalleled data quality

After answering the above-mentioned 8Ws while working across multiple domains, our QA experts began the quest for unparalleled data quality, and the results speak for themselves! We proudly present a groundbreaking rendition of PROVEN™, crafted by Nagarro's formidable QA team, a team that has emerged as the epitome of testing excellence with the core belief that "Testing itself should face rigorous testing". PROVEN™ has been meticulously tailored to cater to the dynamic realms of Big Data, ETL/ELT, and BI Testing.

Figure 2: PROVEN™ test strategy

Penetrative Understanding

- Scope identification and analysis

- Source & Data generation analysis

- Requirement understanding

- Data mapping analysis

- End-to-end data flow analysis

Resilient Planning

- Pragmatic test strategy and roadmap

- Risk-based test planning

- Test data management planning

- Data Quality Dimensions and Standards validation planning

Orderly Coverage

- Data Mapping & transformation test scenarios for maximum coverage

- Test Data generation for validating each quality dimension

- 3-tier test review process by QA Lead, Data Analyst, and Business Stakeholders

Verified Execution

- FREE (Forward Reverse Engineering Evaluation) strategy for detailed execution

- End-to-end data validation from source to target, then BI reports

- Reusable SQL scripts

- Validation of rejected and lost data

- Monitoring of ETL pipelines

- Data Analytics and Visual validations

Effective Reporting

- Strong defect management

- Detailed and customized test reports

- Action plan based on root cause analysis

- Quality metrics collection for continuous improvement

No-touch Training

- Comprehensive, precise, and up-to-date training pathway

- Independent and rapid onboarding in the team

Data Quality Journey: Navigating the 4 Phases to Success

Drawing from our extensive expertise and resurgent PROVEN™, we highly recommend implementing a systematic data validation approach encompassing four key phases within the ETL/ELT process. In each phase, specific activities should be targeted to ensure comprehensive data validation and quality assurance.

To build a fortified ETL/ELT process and to pave the way for high-quality data that generates reliable insights and supports informed decision-making, the activities identified in the 4 phases help in the following ways:

- Ascertaining data integrity, accuracy, and reliability.

- Ensuring the seamless execution of ETL/ELT pipelines.

- Upholding data governance standards.

- Ensuring usability, performance, and security within the BI reports.

Figure 3: Before ETL/ELT, during ETL/ELT, after ETL/ELT, Analytics

Pre-ETL/ELT Validations:

1. Structure and Pattern Validation:

Thoroughly validate the structure and patterns of the source data to detect any anomalies or inconsistencies.

2. Data Quality and Reliability Validation:

Conduct meticulous validation of both the quality of the data and the reliability of the data sources, uncovering potential issues before they arise downstream.

3. Data Cleansing and Standardization Rule Validation:

Rigorously validate the rules defined for data cleansing and standardization, ensuring they effectively address any data quality issues.

4. Source Data Alignment Validation:

Validate that the expectations from the source data align with the specified requirements, guaranteeing a seamless match between the two.
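To make the structure and pattern validation above a little more concrete, here is a minimal Python sketch. It is only an illustration, not part of PROVEN™ itself: the schema, the column names (`customer_id`, `email`, `signup_date`), and the `validate_row` helper are all hypothetical and would be replaced by whatever the data mapping document specifies.

```python
import re

# Hypothetical schema (an assumption for illustration):
# column name -> (expected Python type, optional regex the value must match).
EXPECTED_SCHEMA = {
    "customer_id": (int, None),
    "email": (str, re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")),
    "signup_date": (str, re.compile(r"^\d{4}-\d{2}-\d{2}$")),
}


def validate_row(row, schema=EXPECTED_SCHEMA):
    """Return a list of human-readable issues found in one source row."""
    issues = []
    for column, (expected_type, pattern) in schema.items():
        if column not in row:
            issues.append(f"missing column: {column}")
            continue
        value = row[column]
        if not isinstance(value, expected_type):
            issues.append(
                f"{column}: expected {expected_type.__name__}, "
                f"got {type(value).__name__}"
            )
        elif pattern is not None and not pattern.match(value):
            issues.append(f"{column}: value {value!r} does not match expected pattern")
    # Columns present in the source but absent from the schema are anomalies too.
    for column in sorted(row.keys() - schema.keys()):
        issues.append(f"unexpected column: {column}")
    return issues
```

Running `validate_row` over a sample of each source file before the pipeline starts gives an early list of anomalies; an empty list means the row matches the expected structure.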

During ETL/ELT Validations:

1. Data Cleansing and Logging Validation:

Validate the effective removal of duplicate and invalid data, while ensuring that pertinent information is appropriately logged using proven data cleansing techniques.

2. Data Integrity and Accuracy Validation:

Thoroughly validate the data integrity and accuracy of the transformed data, ensuring that it aligns precisely with the desired outcomes.

3. Error Handling Scenario Validation:

Validate the flawless handling of error scenarios that may arise during the execution of the ETL/ELT pipeline, guaranteeing a smooth and uninterrupted data flow.
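The cleansing and logging checks above can be sketched as follows. This is a simplified illustration under our own assumptions: the `cleanse` and `check_no_lost_rows` helpers, the logger name, and the validity rule passed in by the caller are hypothetical stand-ins for the pipeline's real cleansing step.

```python
import logging

logger = logging.getLogger("etl.cleansing")  # hypothetical logger name


def cleanse(rows, key, is_valid):
    """Split rows into kept and rejected, logging a reason for every rejection."""
    seen = set()
    kept, rejected = [], []
    for row in rows:
        if not is_valid(row):
            reason = "invalid"
        elif row[key] in seen:
            reason = "duplicate"
        else:
            seen.add(row[key])
            kept.append(row)
            continue
        rejected.append({"row": row, "reason": reason})
        logger.warning("rejected row %r: %s", row, reason)
    return kept, rejected


def check_no_lost_rows(rows, kept, rejected):
    """Every input row must end up either kept or rejected, never silently dropped."""
    return len(rows) == len(kept) + len(rejected)
```

A test can then assert on three things at once: that duplicates and invalid rows are removed, that each rejection carries a reason, and that the kept and rejected counts add up to the input count, which is a simple guard against lost data.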

Post-ETL/ELT Validations:

1. Reconciliation and Reliability Validation:

Thoroughly validate the data reconciliation process, ensuring accuracy, consistency, and reliability within the targeted system.

2. Data Governance Standards Validation:

Validate compliance with data governance standards, if applicable, to ensure adherence to established data management practices and policies.

3. Successful ETL/ELT Pipeline Execution Validation:

Validate the successful execution of ETL/ELT pipelines, verifying that data is effectively transformed, integrated, and loaded according to defined specifications.
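As an illustration of the reconciliation check above, the sketch below compares a source and a target table on row count, a measure total, and key coverage. It uses Python's built-in `sqlite3` module purely so the example is self-contained; the `reconcile` helper and the table and column names used with it are hypothetical, and a real project would run equivalent SQL against its own source and target systems.

```python
import sqlite3


def reconcile(conn, source, target, key, measure):
    """Compare row counts, a measure total, and key coverage between two tables.

    Table and column names are interpolated directly into the SQL, so they
    must come from trusted configuration, never from user input.
    """
    cur = conn.cursor()
    src_count, src_sum = cur.execute(
        f"SELECT COUNT(*), COALESCE(SUM({measure}), 0) FROM {source}"
    ).fetchone()
    tgt_count, tgt_sum = cur.execute(
        f"SELECT COUNT(*), COALESCE(SUM({measure}), 0) FROM {target}"
    ).fetchone()
    # Keys that exist in the source but never arrived in the target.
    missing = sorted(
        r[0]
        for r in cur.execute(
            f"SELECT {key} FROM {source} EXCEPT SELECT {key} FROM {target}"
        )
    )
    return {
        "count_match": src_count == tgt_count,
        "sum_match": src_sum == tgt_sum,
        "missing_in_target": missing,
    }
```

Counts and totals are cheap first-line checks; the `EXCEPT` query then pinpoints which records were lost, which tends to be far more useful for root cause analysis than a bare mismatch flag.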

Analytics Validations:

1. Data Correctness, Completeness, and Transformation Validation:

Validate the accuracy, integrity, and completeness of the data, ensuring it undergoes appropriate transformations as required.

2. Data Calculation and Aggregation Validation:

Validate the accuracy and correctness of data calculations and aggregations, particularly for derived metrics and key performance indicators.

3. BI Reports Usability Validation:

Validate the functionality and usability of BI reports, including data filtering, sorting, navigation, and overall user experience.

4. Visual Data Representation Validation:

Validate the accuracy and effectiveness of data representation in visualizations, ensuring they convey insights clearly and accurately.

5. Performance Validation with Large Data Sets:

Validate the performance of BI reports and data processing capabilities when handling large data sets, ensuring efficiency and responsiveness.

6. Authentication, Authorization, and Data Confidentiality Validation:

Validate the robustness of authentication and authorization mechanisms, along with data confidentiality measures, safeguarding sensitive information.
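For the calculation and aggregation validation listed above, one common approach is to recompute a KPI independently from the detail rows and compare it with the figure shown in the BI report. The sketch below assumes the reported values have already been exported into a plain dictionary; the `recompute_totals` and `compare_with_report` helpers and the `region` and `revenue` fields in the usage note are hypothetical.

```python
import math
from collections import defaultdict


def recompute_totals(detail_rows, group_by, measure):
    """Independently aggregate a measure per group from warehouse detail rows."""
    totals = defaultdict(float)
    for row in detail_rows:
        totals[row[group_by]] += row[measure]
    return dict(totals)


def compare_with_report(expected, reported, rel_tol=1e-9):
    """Return {group: (expected, reported)} for every group that disagrees."""
    mismatches = {}
    for group in expected.keys() | reported.keys():
        exp, rep = expected.get(group), reported.get(group)
        # A group missing on either side counts as a mismatch.
        if exp is None or rep is None or not math.isclose(exp, rep, rel_tol=rel_tol):
            mismatches[group] = (exp, rep)
    return mismatches
```

For example, calling `recompute_totals(rows, "region", "revenue")` and passing the result to `compare_with_report` along with the report's per-region figures returns an empty dictionary when the report agrees with the warehouse. The tolerance is there because floating-point totals computed in different engines rarely match to the last digit.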

Silent success: Our accomplishments speak through these inspiring stories

Unleashing Efficiency

Automation consultancy slashed the manual regression cycles by 90%, while detecting 40 critical defects in half-yearly data. Delivered data quality dashboards to empower DW stakeholders with complex BI requirements, compliant with healthcare domain rules and data quality standards.

Driving Efficiency

QA best practices and processes resulted in a 50% reduction in test cycle time and ensured 100% data validation across source, stage, target, and 50 BI reports after a seamless data migration from 10 dispersed sources to a centralized DB for an energy domain client.

Empowering Success

A finance domain client achieved growth by retaining clients, attracting new opportunities, and meeting project deadlines with confidence, thanks to a high-quality data warehouse and BI reports that came out of rigorous testing using 800+ test cases.

Seamless Migration

Validated 100+ TB of data by analyzing 60+ DB jobs with 150+ test SQL scripts to enable an automotive customer's seamless migration from on-premises to cloud solutions.

Looking beyond the horizon: This is just the beginning!

Embarking on the journey towards data quality may seem like a colossal mountain to climb, but fear not: success is within reach with proven tips to scale any summit! With our revamped PROVEN™ methodology, which is specifically tailored to address the intricacies of business data requirements, we can conquer each layer of the ETL/ELT process. By diligently executing the activities outlined in the 4 phases, we can surmount any challenge, validate data integrity, ensure accuracy, and enhance reliability. Brace yourself to unleash the power of high-quality data to propel your business towards new heights of growth and prosperity. Interested? Get in touch and we’ll be happy to help!