nagarro

an outstanding digital solutions developer and a great place to work in.

relations

governance, reports, announcements, and investor events.

press releases

doing, and what people are talking about.

sustainability

Learn about our initiatives.

becoming a Nagarrian?

- what we do

- Fluidic Intelligence Nagarro's framework for AI transformation.

-

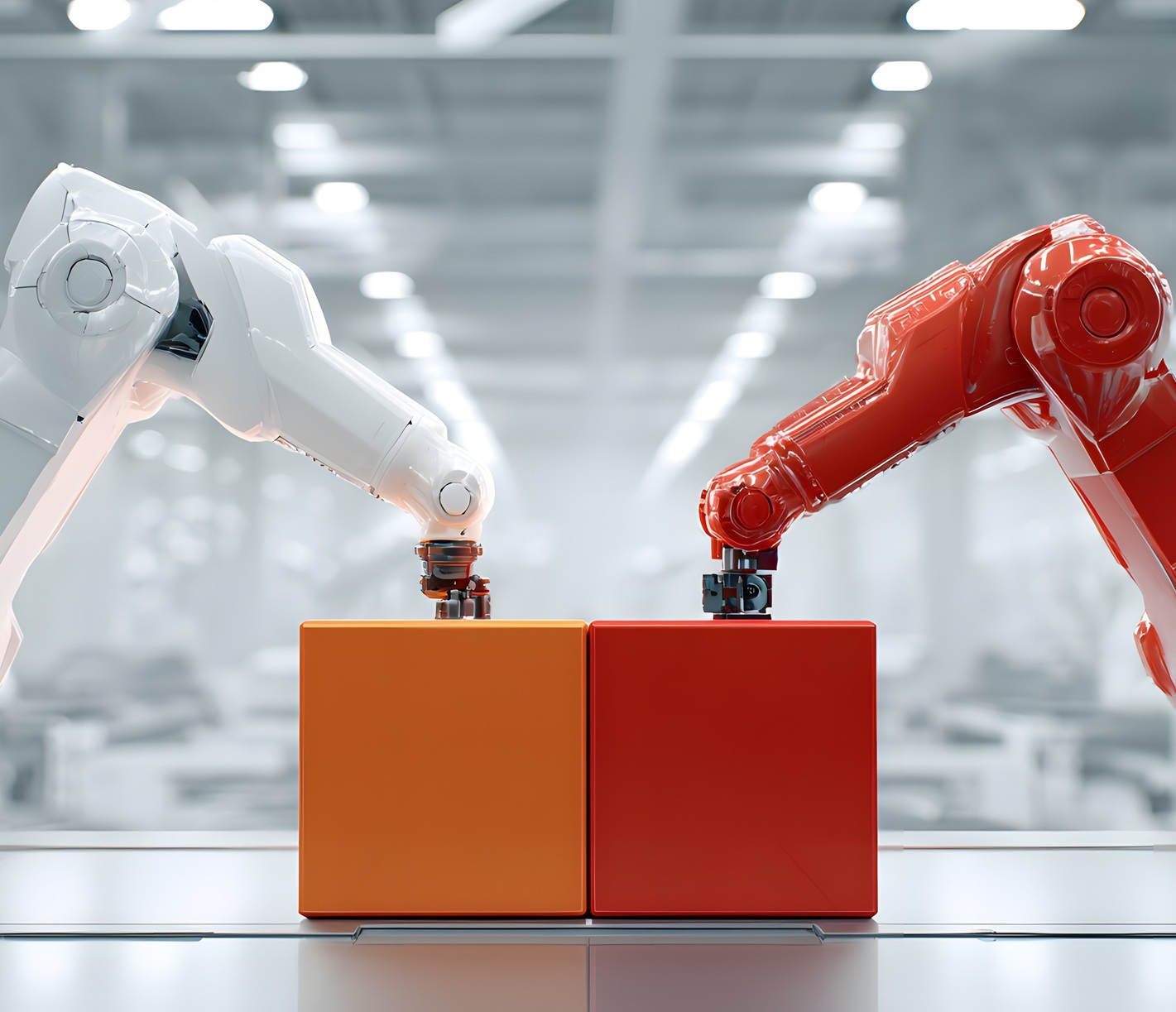

Digital Engineering

Value-driven and technology savvy. We future-proof your business.

- Intelligent Enterprise Helping you master your critical business applications, empowering your business to thrive.

- Experience and Design Harness the power of design to drive a whole new level of success.

- automotive

- banking and financial services

- energy & utilities

- gaming & entertainment

- industry and automation

- insurance

- life sciences & healthcare

- media & publishing

- non-profits and education

- private equity

- public sector

- retail & cpg

- siemens

- smart buildings

- software & hi-tech

- sports AI

- telecommunications

- travel and logistics

- our work

- insights

- AI

- careers

- get to know us

- Africa & Asia-Pacific

- Central & South America

- Europe

- Middle East

- North America

- contact us


- privacy policy

- imprint